Blog

XLhub, Excel and Your SQL Server Data Warehouse

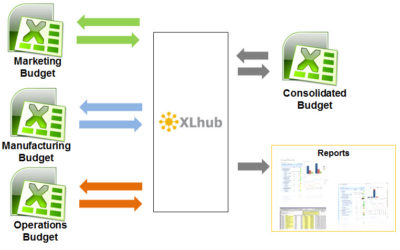

We recently came across an application of XLhub in a Data Warehouse. Here is the scenario: The contents of the dimension tables in a data warehouse need to be modified from time to time. For example, new Products have to be added to the Product dimension table or the...

Using Excel for Tracking Salary and Bonuses

Employee salary information is very confidential, yet many companies maintain salary and bonus information in Excel spreadsheets, and often email these files as attachments to large distribution lists. A simple oversight by someone could put this information in the...

Excel Aficionado Talks Excel and XLhub

Scott Maier is a faculty member and professor of journalism at the University of Oregon. He has over 20 years of experience as a wire-service reporter, and his “beats” have spanned from city hall to the state legislature, Latin America, as well as a variety of other news...

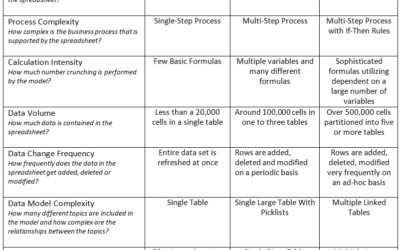

Spreadsheet Complexity Index

Here’s a tool for assessing the complexity of your Excel spreadsheet model. If your model is complex, as a spreadsheet design guideline we recommend separating these three key features of Excel: Reporting, Calculation, and Data Storage. The more tightly coupled are the...

The Spreadsheet Engineering Project

I would like to share some interesting information I learned from the Spreadsheet Engineering Project. This research project was undertaken at the Tuck School of Business at Dartmouth College. Professors Baker, Powell and Lawson conducted the research. Quoting from...

Where Did You Get Your Data?

Ever been through a PowerPoint presentation with impressive charts and tables explaining trends in your company’s operations? Did you start to wonder where the data used to create the charts came from? The data (and the chart) probably came from an Excel...

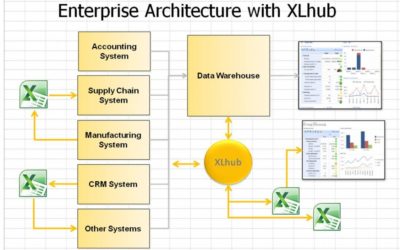

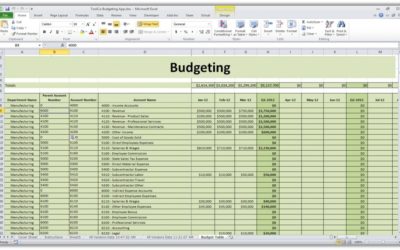

Excel, the Last Mile in Business Intelligence

As business intelligence consultants, we come across Microsoft Excel wherever we go. Excel is a fantastic tool. It is easy to learn, incredibly flexible, and has powerful data management and business intelligence features. Our clients have sophisticated enterprise...